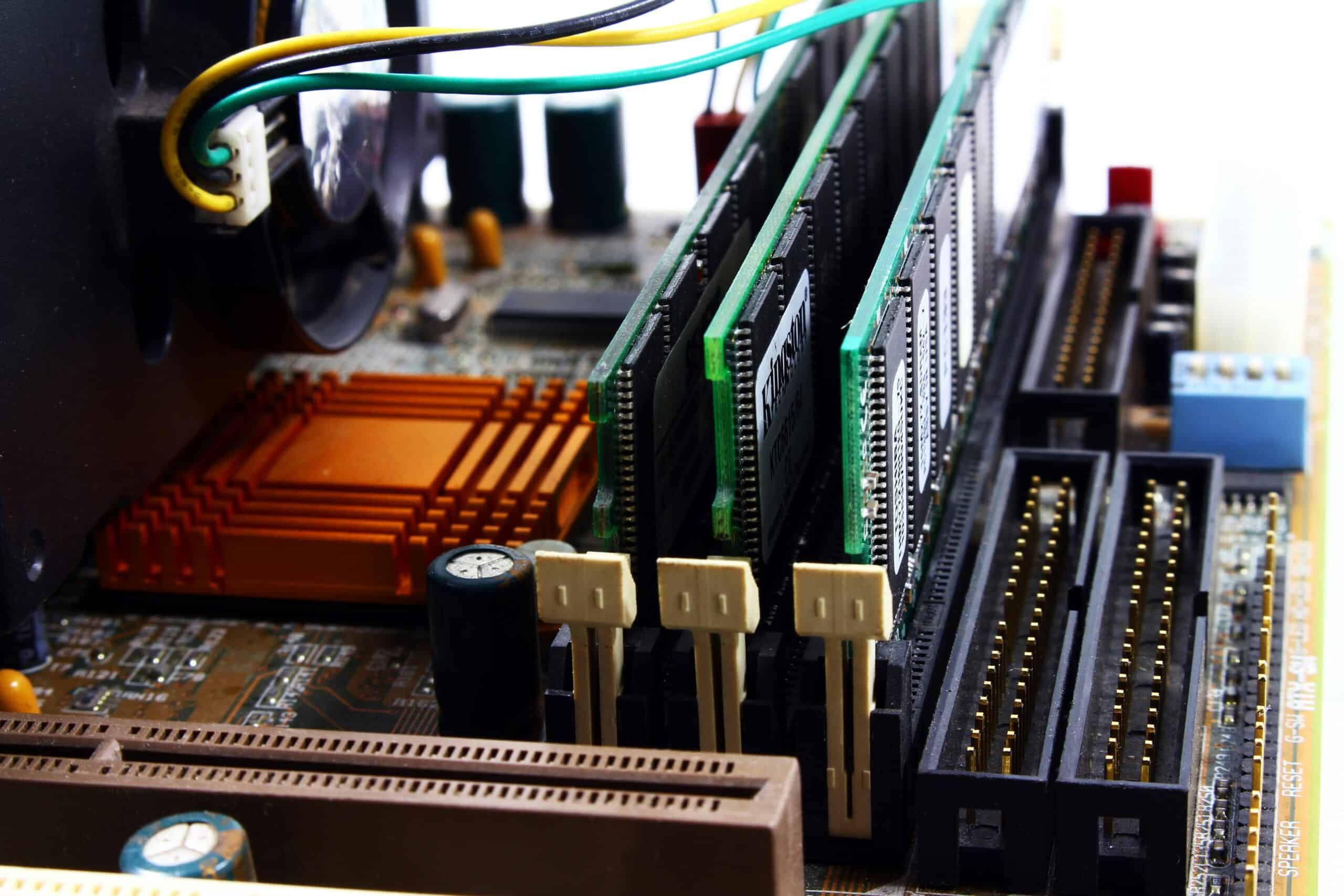

Hardware failure is among the top causes of data loss, resulting in costly operational disruptions, financial losses and reduced productivity.

But while some system outages are inevitable, there are effective ways to fully prevent data loss from hardware failure – even if all your servers are toast. In this post, we explore the most important strategies.

Hardware Failure: Common Causes

Hardware failure can occur for several different reasons, and these issues are well known even among manufacturers. The most common causes include:

- Aging hardware: Storage drives naturally degrade over time, especially hard disk drives (HDDs), which have moving parts.

- Power issues: Sudden surges, fluctuations or loss of power can lead to hardware damage and data corruption.

- Environmental elements: Heat and humidity are common causes of hardware failure, which is why spaces for IT infrastructure must have proper climate regulation.

- Physical hardware damage: Physical damage, such as shock and vibration during installation or operation, can cause hardware to fail.

- Human error: Accidents during configuration, maintenance and replacement can increase the risk of failure.

Quick Summary

2025 Hardware Failure Snapshot

- Annual Failure Rate: 1.36% across all drive models (up from previous years).

- Most at Risk: 10TB drives currently show a 5.23% failure rate.

- Safest Bet: 16TB drives currently show <1% failure rate.

- The "Danger Zone": Drives aged 3–5 years (the “bathtub curve” spike).

- Reality Check: In a company with 100 drives, statistically 1 to 2 will fail this year.

Source: Backblaze 2025 Drive Stats
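The reality-check figure in the snapshot is an expected-value calculation: fleet size multiplied by the annual failure rate. A minimal sketch of that arithmetic follows. The function names are illustrative, and the second function assumes drives fail independently of one another, which is a simplification.

```python
def expected_failures(drive_count: int, annual_failure_rate: float) -> float:
    """Expected number of drive failures in one year."""
    return drive_count * annual_failure_rate


def probability_of_any_failure(drive_count: int, annual_failure_rate: float) -> float:
    """Chance that at least one drive fails in a year.

    Assumes each drive fails independently, which real fleets only approximate.
    """
    return 1 - (1 - annual_failure_rate) ** drive_count


fleet_size = 100
afr = 0.0136  # 1.36% annual failure rate quoted in the snapshot

print(f"Expected failures per year: {expected_failures(fleet_size, afr):.2f}")
print(f"Chance of at least one failure: {probability_of_any_failure(fleet_size, afr):.1%}")
```

Even with a low per-drive rate, the chance that a 100-drive fleet sees at least one failure in a year is high, which is why backups matter more than betting on any single drive.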

Levels of Severity

When hardware and software suddenly stop working, three different levels of data loss can occur:

- Minor losses: Any data in transit to or from the server is usually lost because the system fails before saving it.

- Widespread data corruption: More serious system errors can corrupt any new or modified data from the last several minutes or even hours, resulting in a much greater loss of data.

- Complete data loss: The most catastrophic malfunctions can render a drive inoperable or essentially wipe out all data, thus requiring a full data restore.

Unpatched software or operating systems and aging disk drives are the most common culprits for the biggest data catastrophes. Most hard drives that fail do so within three years, on average. That means as every year passes, there’s a greater chance of a failure that could devastate your data storage.

Key Takeaway

Hardware Failures Rarely Cause the Longest Downtime — Recovery Issues Do

Most organizations assume the hardware failure itself will be the biggest problem. In reality, the longer outage often happens during recovery. Backups may exist but haven’t been tested, restoration workflows may be unclear, and infrastructure dependencies may slow the process. This is why modern disaster recovery strategies focus not just on backup creation, but on automated recovery testing and validated recovery workflows.

Hardware Failure vs. Other Causes of Data Loss

System failures aren’t the only data killers to consider. The most common causes of data loss include:

- Hardware failure

- Software errors

- Malware and viruses

- Accidental data deletion

- Malicious data deletion

- Physical hardware damage

- Misplaced or stolen devices

- Power failures

- Network failures

- Overwritten data

- Expired software licenses (SaaS application data)

Each of these issues has the potential to cause a disaster, but some are more likely than others.

Human error and hardware failure make up the bulk of business data loss. According to Verizon’s 2024 report, 68% of all data breaches involve the human element, such as compromised credentials or accidental file deletion. While natural disasters tend to get the biggest headlines, they don’t happen every day. Mistakes and system malfunctions do.

How to Prevent Data Loss from Hardware Failure

Below are the most effective strategies businesses use today to prevent data loss from hardware failure and ensure rapid recovery.

Further below, we also include a planning framework that turns these steps into a structured, repeatable process.

For a broader recovery roadmap, see our business continuity planning guide, which explains how to document recovery roles, response procedures, and testing processes as part of a structured resilience strategy.

Expert Insight

Dale Shulmistra — Data Protection Specialist, Invenio IT

Datto Blue Partner • Business Continuity & Disaster Recovery Specialist

“The biggest mistake I see isn't necessarily a lack of backups. It’s that many companies use a ‘set it and forget it’ mentality. They aren’t proactively testing their backups or their recovery workflows. So when a large data-loss event happens, such as hardware failure, they suddenly realize the backups aren’t viable. This makes the disruption far longer and more costly — yet it can be prevented with better disaster recovery testing automation.”

1) Begin with a Business Continuity Plan

A business continuity plan (BCP) serves several purposes, but its most important objective is ensuring that your business can continue operating after a disruptive event.

Your BCP should outline the steps and systems for responding to all types of disasters, ranging from hardware failures and system malfunctions to fires and floods. Think of this document as a recovery roadmap. It should state exactly how your business will attempt to recover from data loss and the procedures for getting everything back online. It should also identify preventative measures that help the company avoid hardware failure and data loss.

Additionally, the document should contain a thorough risk assessment and a business impact analysis. These will help identify your potential weaknesses and prioritize the most vital elements of your continuity planning.

2) Back Up Your Data

A robust data backup system is arguably the best way to prevent data loss from hardware failure because it ensures you can restore any files that have been destroyed.

You should regularly back up every kind of data your business handles, including:

- Applications and software data

- Operating system data

- Databases

- Emails

- Information assets (all company files)

- Customer relationship management (CRM) data

- Virtual machines

- Cloud & SaaS data

- Endpoint data

Data backups are a critical failsafe, especially in the age of ransomware. With frequent restore points, backups let you restore a file that has been accidentally erased or damaged, so it is never truly irrecoverable.

Your backups should be reliable, complete and quickly recoverable. Today, that means deploying a 360-degree business continuity and disaster recovery (BC/DR) solution like Datto SIRIS. (Check Datto SIRIS pricing for your organization.)

3) Replicate Data to the Cloud

In some instances of hardware failure, you may find that your local backups are unreliable too. What now? The solution is not to rely solely on one type of backup.

Today’s best BC/DR systems use an approach called hybrid cloud backup, which backs up data on site and in the cloud. If your local hardware experiences a catastrophic failure, you can turn to plan B. You’ve still got a backup in the cloud, allowing you to access all your files in seconds.

4) Virtualize Data Backups

Backing up data to the cloud is a smart step, but it’s even better if you can virtualize those backups. With virtualization, you can boot up the backup as a virtual machine and continue using your critical applications until the on-site systems are ready to go.

Some BC/DR solutions, even those designed for small businesses like Datto ALTO, store your backup as an image-based, fully bootable virtual machine. You can complete this virtualization on the on-site BC/DR appliance, in the cloud or with a combination of both, a process known as cloud virtualization. If your on-premises infrastructure fails, you can still virtualize your backup from anywhere.

Unlike a full data recovery, which can take longer, virtualization lets you access all your data and applications in seconds. Think of the virtual machine like a complete Windows operating system running within a single window of your computer, where you can continue to run all the applications that power your business. Even better, the system will still back up any new or modified data while you use this virtual environment.

5) Set Recovery Point Objectives (RPOs)

Some data loss is inevitable, but you can limit it with a documented backup strategy. A key part of that strategy is setting a recovery point objective (RPO) for your backups. Your RPO dictates how old your data can be when you need to recover from a backup. In other words, it sets how frequently you need to perform backups to avert a major disruption from data loss.

Let’s say your RPO for critical files and application data is one hour. In that case, you should perform new backups at least every 60 minutes. If a drive fails, you’d lose at most one hour’s worth of data.
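To make the one-hour example concrete, here is a minimal sketch that measures how much work would be lost at a given failure time and checks it against an RPO. The function names and the sample timestamps are hypothetical, chosen only for illustration.

```python
from datetime import datetime, timedelta


def data_loss_window(backup_times: list[datetime], failure_time: datetime) -> timedelta:
    """Time between the failure and the most recent backup taken before it."""
    earlier = [t for t in backup_times if t <= failure_time]
    if not earlier:
        raise ValueError("no backup exists before the failure")
    return failure_time - max(earlier)


def meets_rpo(backup_times: list[datetime], failure_time: datetime, rpo: timedelta) -> bool:
    """True if the data lost at failure_time stays within the RPO."""
    return data_loss_window(backup_times, failure_time) <= rpo


# Hourly backups from 9:00 to 12:00 (illustrative dates).
hourly_backups = [datetime(2025, 1, 6, hour, 0) for hour in (9, 10, 11, 12)]
failure = datetime(2025, 1, 6, 12, 45)

print("Data lost:", data_loss_window(hourly_backups, failure))
print("Within 1-hour RPO:", meets_rpo(hourly_backups, failure, timedelta(hours=1)))
```

If the backup job silently stops running, the same check starts failing, which is one reason to monitor backup timestamps rather than assume the schedule is being met.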

Your RPO is based on several factors, most notably the business impact of prolonged data loss. As such, determine your RPO during the business impact analysis phase of your business continuity planning.

6) Test Your Backups

Having a robust data backup system is the most important way to prevent data loss from hardware failure, but you need to test those backups to confirm that they’re viable. Don’t assume that they’ll work when the time comes, especially if you’re relying on older incremental backup processes, which are notorious for failure during the recovery process.

Spending hours piecing together a backup is a nightmare scenario for IT managers who are racing to restore data after a major server failure. If you want to avoid the problems with traditional incremental backups altogether, consider moving to a backup system that eliminates dependency on the incremental chain.

Backup failures happen surprisingly often, so testing them for integrity and bootability is crucial. Ideally, you’ll use an automated process that alerts your IT teams to any issues.
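One simple piece of an automated check is comparing a backup file against a checksum recorded when the backup was taken. The sketch below covers integrity only, not bootability, and it is not how any particular BC/DR product works. The function names and file names are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so large backup images do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_backup(backup_path: Path, expected_sha256: str) -> bool:
    """True only if the backup file exists and matches the recorded checksum."""
    return backup_path.is_file() and sha256_of(backup_path) == expected_sha256


if __name__ == "__main__":
    # Demo with a throwaway file standing in for a real backup image.
    with tempfile.TemporaryDirectory() as tmp:
        image = Path(tmp) / "backup.img"
        image.write_bytes(b"example backup contents")
        recorded = sha256_of(image)  # stored at backup time in a real workflow

        print("Intact backup passes:", verify_backup(image, recorded))
        image.write_bytes(b"corrupted")
        print("Corrupted backup passes:", verify_backup(image, recorded))
```

A scheduled job could run a check like this and alert the IT team on any failure. A full test would also boot the backup and confirm that applications start.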

7) Patch and Update Your Systems

Keep in mind that you can prevent some hardware failure by identifying potential vulnerabilities, such as outdated system files and firmware.

Our advice? Patch your systems — regularly.

No matter whether you’re running a small business with a few desktops or an enterprise company with sprawling infrastructure across the globe, you should be fully aware of all the hardware and software you’re using on every machine. More than that, you should install updates for those systems as soon as they become available, assuming they’re not automated.

Patches exist for a reason, often to resolve critical stability problems and other vulnerabilities that leave your systems at risk for malfunction. Updating your systems proactively and on a regular schedule is easy. Recovering from a major data loss after a system malfunction, on the other hand, is rarely so simple.

8) Replace Aging Hardware

The risk of hardware failure increases as hardware ages. You can reduce the risk of data loss by replacing those components before they have the chance to fail. This is especially true for conventional spinning disk drives, whose parts are constantly moving and naturally degrade over time.

Why wait until the drives and the data saved on them are suddenly gone? You know you need to replace them every few years, so adopt a preemptive strategy. Hot swap drives are increasingly common these days, which makes it even easier to replace old drives without upgrading to completely new servers.

Follow the manufacturer’s recommended replacement timeline to determine how often you need to upgrade. These recommendations tend to max out at about five years because it becomes exponentially more expensive for manufacturers to support aging servers.

The same goes for all your hardware: know how often to replace each component and follow those guidelines accordingly to prevent unexpected failure.

9) Properly Store and Maintain Hardware

The physical environment in which your hardware operates plays a significant role in its longevity. Be sure to maintain a stable environment for all servers, storage devices and other equipment.

- Ensure proper ventilation: Overheating is a major cause of hardware failure. Ensure that devices have adequate airflow and are not operated in excessively hot environments.

- Clean hardware: Dust and debris can cause overheating and damage to internal components. Regularly cleaning your computers and servers is an effective preventative measure.

- Use uninterruptible power supplies (UPS): A UPS provides backup power in the event of an outage, protecting against power surges and fluctuations, which can damage hardware and cause data loss.

Example Data Loss Prevention Framework

The tips above provide a basic foundation for understanding how to prevent data loss, but true data resilience requires a more structured framework. At Invenio IT, we recommend organizing hardware strategy into these three critical pillars:

1. Identify & Assess (The Strategy Layer)

Before you can prevent loss, you must know what is at risk. This stage involves auditing your environment to eliminate “blind spots.”

- Inventory Critical Assets: Catalog all servers, NAS devices, and endpoints.

- Define Your RPO: Determine your Recovery Point Objective—the maximum amount of data (in time) your business can afford to lose.

- Monitor Lifecycle: Track the age of every drive. Know when each one was implemented and when it should be replaced.
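Lifecycle monitoring can start as a simple inventory with install dates. The sketch below flags drives older than a chosen threshold. The `Drive` record, the serial numbers and the five-year default are assumptions for illustration; use the replacement timeline your manufacturer recommends.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Drive:
    serial: str
    installed_on: date


def drives_due_for_replacement(
    drives: list[Drive], today: date, max_age_years: float = 5.0
) -> list[Drive]:
    """Return drives whose age in years meets or exceeds max_age_years."""
    due = []
    for drive in drives:
        age_years = (today - drive.installed_on).days / 365.25
        if age_years >= max_age_years:
            due.append(drive)
    return due


# Hypothetical inventory; the date is fixed so the result is repeatable.
inventory = [
    Drive("SN-001", date(2019, 3, 1)),
    Drive("SN-002", date(2022, 8, 15)),
    Drive("SN-003", date(2024, 1, 10)),
]

for drive in drives_due_for_replacement(inventory, today=date(2025, 6, 1)):
    print(f"Replace {drive.serial}, installed {drive.installed_on}")
```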

2. Protect & Maintain (The Prevention Layer)

In this stage, you will physically and digitally harden your hardware to extend its lifespan and prevent mid-day crashes.

- Environmental Control: Ensure proper ventilation and use uninterruptible power supplies (UPS) to guard against surge-induced failure.

- Routine Patching: Keep firmware and OS versions current to prevent stability-related hardware hangs.

- Physical Hygiene: Regular cleaning to prevent dust-driven overheating.

3. Verify & Recover (The Resilience Layer)

Since 100% hardware reliability is impossible, your framework must include a “fail-forward” mechanism.

- Redundant Backups (3-2-1 Rule): Keep three copies of data, on two different media, with one offsite.

- Automated Testing: Routinely test your backups to ensure they are viable and recoverable. Automate this recovery testing process according to a specific schedule.

- Rapid Restore Protocols: Have a documented plan to virtualize your environment instantly if a primary server drive fails.
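The 3-2-1 rule in the list above is easy to express as a check against an inventory of data copies. This is a minimal sketch: the `BackupCopy` record and media labels are hypothetical, and it assumes the production copy counts as one of the three.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackupCopy:
    name: str
    media: str     # illustrative labels, e.g. "server-disk", "backup-appliance", "cloud"
    offsite: bool


def satisfies_3_2_1(copies: list[BackupCopy]) -> bool:
    """Three or more copies, on two or more media types, with one or more offsite."""
    enough_copies = len(copies) >= 3
    enough_media = len({copy.media for copy in copies}) >= 2
    has_offsite = any(copy.offsite for copy in copies)
    return enough_copies and enough_media and has_offsite


copies = [
    BackupCopy("production", "server-disk", offsite=False),
    BackupCopy("local backup", "backup-appliance", offsite=False),
    BackupCopy("cloud replica", "cloud", offsite=True),
]

print("Meets 3-2-1:", satisfies_3_2_1(copies))
print("Meets 3-2-1 without the cloud copy:", satisfies_3_2_1(copies[:2]))
```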

Guide

Which Hardware Failure Prevention Strategy Matters Most?

| If You Are… | Prioritize This | Why |
|---|---|---|
| A Small Business | The 3-2-1 Backup Rule | A single server crash can wipe out your entire business if an off-site copy doesn’t exist. |
| A Growing Company | Hardware Lifecycle Tracking | As infrastructure scales, the statistical probability of random drive failure rises significantly. Consider replacing drives before year 5. |
| An Enterprise | Instant Virtualization (BCDR) | When downtime costs thousands of dollars per minute, waiting hours to restore a failed physical server is not an option. |
| In Manufacturing / Industrial | Environmental Controls & UPS | Power surges, dust, and overheating can destroy physical servers much faster than age alone. |

Real-World Scenario: The Friday Afternoon Server Crash

To understand the true cost of hardware failure—and how proper planning can limit its impact—consider a real-world incident involving a mid-sized manufacturing client of Invenio IT. This example highlights the difference between a traditional backup strategy and a true Business Continuity and Disaster Recovery (BCDR) approach.

The Scenario

At 4:30 PM on a Friday, the client’s primary SQL server experienced a critical RAID controller failure. The server stored approximately 2TB of production data required to support the company’s weekend manufacturing shifts.

The failed RAID controller used proprietary hardware, and a compatible replacement could not be sourced until Monday morning. Without a continuity solution in place, the organization faced the possibility of the server remaining offline for the entire weekend.

The Traditional Backup Approach

With a standard backup-only strategy, recovery would have required several steps:

- Waiting for replacement hardware to arrive

- Rebuilding the server storage system

- Restoring the SQL server and production database from backup

- Reconfiguring the application environment

Even under ideal conditions, this process could have resulted in up to 72 hours of downtime, followed by several additional hours to restore and validate systems. In practice, this would have halted weekend production entirely.

The BCDR Solution

Because the client had implemented a BCDR solution, the recovery process followed a different path.

Using Instant Virtualization, the server’s backup image was launched directly on the local BCDR appliance. Instead of waiting for the physical server to be repaired, the production workload was temporarily run as a virtual machine directly from the backup device.

The Timeline

- 4:30 PM: Server failure detected

- Within minutes: Instant Virtualization initiated

- ~15 minutes later: SQL server fully operational as a virtual machine

Total downtime: approximately 15 minutes.

The Financial Impact

By avoiding a weekend production shutdown, the client prevented an estimated $15,000 in emergency IT labor and lost production revenue, not including the operational disruption that would have affected staff schedules and manufacturing output.

The Outcome

Employees completed their Friday shift normally, and weekend production continued without interruption. The failed hardware was replaced the following week during a scheduled maintenance window, allowing the workload to migrate back to the physical server with minimal impact on business operations.

Frequently Asked Questions (FAQ) about Preventing Data Loss

To help you find solutions to the most pressing data loss issues as quickly as possible, we put together answers for some of the most common questions we hear from our clients.

1. What are some different types of data loss prevention?

Three important methods of data loss prevention are data backups, system patching and routine hardware replacement. Backups ensure that compromised data can be restored after incidents such as hardware failure, accidental deletion, malware or cyberattack, while patching and hardware replacement reduce the chance of a failure in the first place.

However, these methods should not be confused with data loss prevention solutions (DLP), which are primarily security solutions designed to prevent sensitive data from being shared with unauthorized parties.

2. What are the most common causes of data loss?

Hardware failure is among the most common causes of data loss. This includes server outages due to failing disk drives and data corruption in endpoint devices, such as laptops. Another frequent reason for data loss is human error, such as accidental deletion, overwriting data or taking actions that lead to data breaches. Among cyberattacks and malware, ransomware attacks are the leading cause of data loss, affecting more than 62% of global businesses in 2025.

3. How can you prevent data loss due to hardware failure?

The best way to prevent data loss due to system failure is to back up your data frequently. Nearly every organization loses data because of hardware failure, but having dependable backups ensures that you can recover the data even if you can’t retrieve it from the primary storage device. To prevent a system failure from occurring, continually monitor device performance and replace aging hardware before it fails. Regularly updating and patching systems will also help to eliminate vulnerabilities that could lead to system failure.

4. What is an example of data loss?

The term data loss can refer to any event that results in deleted, damaged or missing data. A common example is when hardware failure destroys files that a business needs to operate. Additional examples of data loss include accidentally deleted files, data destroyed by malware, corrupted files and maliciously deleted data.

5. What is a good way to protect your data in case your computer malfunctions?

A good way to protect your data from being permanently destroyed by malfunctioning hardware or software is to maintain frequent data backups. Routine backups ensure that you can restore your critical files, applications and operating system data, even if your computer malfunctions.

6. What are the early warning signs of a failing hard drive?

Watch for frequent system crashes, mysteriously corrupted files, and unusually slow file-loading times. If you access the drives close-up, unusual clicking or grinding noises can also be a warning sign.

7. Can data be recovered from a physically damaged hard drive?

Yes, but it often requires expensive data recovery services. Software tools cannot fix broken internal components. To avoid this costly and uncertain process, maintain a strict 3-2-1 backup strategy, keeping copies of data stored in different locations and devices.

8. What is the difference between RAID redundancy and data backup?

RAID protects against a single drive failure by mirroring data or storing parity information across multiple disks to keep a server running. However, it is not a true backup. If data is corrupted, deleted or hit by ransomware, RAID will instantly replicate that damage across all drives. You still need additional backups, ideally off-site.
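The difference is easier to see in a toy model. The sketch below simulates a mirrored volume with in-memory dictionaries. It is not a real RAID implementation, and the class and file names are hypothetical. A failed disk is survivable, but a deletion reaches every mirror, while a separate point-in-time copy is unaffected.

```python
class MirroredVolume:
    """Toy stand-in for a mirrored array: every write and delete hits all healthy disks."""

    def __init__(self, disk_count: int = 2) -> None:
        self.disks = [{} for _ in range(disk_count)]

    def _healthy(self):
        return [disk for disk in self.disks if disk is not None]

    def write(self, name: str, data: bytes) -> None:
        for disk in self._healthy():
            disk[name] = data

    def delete(self, name: str) -> None:
        for disk in self._healthy():
            disk.pop(name, None)

    def fail_disk(self, index: int) -> None:
        self.disks[index] = None  # simulate a dead drive

    def read(self, name: str):
        healthy = self._healthy()
        return healthy[0].get(name) if healthy else None

    def snapshot(self) -> dict:
        """Point-in-time copy kept outside the array, standing in for a backup."""
        healthy = self._healthy()
        return dict(healthy[0]) if healthy else {}


volume = MirroredVolume(disk_count=2)
volume.write("orders.db", b"customer orders")
backup = volume.snapshot()

volume.fail_disk(0)
print("After one disk fails:", volume.read("orders.db"))    # mirror still serves the file

volume.delete("orders.db")                                  # accidental or malicious deletion
print("After deletion, on the array:", volume.read("orders.db"))
print("After deletion, in the backup:", backup.get("orders.db"))
```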

Conclusion

To prevent data loss from hardware failure, businesses must regularly back up their data to a secondary storage location, such as a dedicated local backup device, cloud storage system or a combination of both. While some hardware failure can be prevented by regularly updating and replacing aging components, only data backups provide a complete failsafe against permanent data loss.

Prevent Data Loss at Your Business

See how your organization can prevent data loss from hardware failure and other common causes by leveraging dependable BC/DR solutions from Datto. Explore Datto backup solutions or schedule a call with one of our data protection specialists at Invenio IT for more information. You can also reach us by calling (646) 395-1170 or emailing success@invenioIT.com.